Steve Jobs didn't develop the iPad; his employees did.

The LED and computer microchip were developed by NASA.

Wrong, they were developed by Bell Labs and Texas Instruments.

WTF, do either of you know how Google works??

[Excerpt]

Electroluminescence as a phenomenon was discovered in 1907 by the British experimenter H. J. Round of Marconi Labs, using a crystal of silicon carbide and a cat's-whisker detector.[11][12] Russian inventor Oleg Losev reported creation of the first LED in 1927.[13] His research was distributed in Russian, German and British scientific journals, but no practical use was made of the discovery for several decades.[14][15]

Rubin Braunstein[16] of the Radio Corporation of America reported on infrared emission from gallium arsenide (GaAs) and other semiconductor alloys in 1955.[17] Braunstein observed infrared emission generated by simple diode structures using gallium antimonide (GaSb), GaAs, indium phosphide (InP), and silicon-germanium (SiGe) alloys at room temperature and at 77 kelvins.

In 1957, Braunstein further demonstrated that the rudimentary devices could be used for non-radio communication across a short distance. As noted by Kroemer,[18] Braunstein "... had set up a simple optical communications link: Music emerging from a record player was used via suitable electronics to modulate the forward current of a GaAs diode. The emitted light was detected by a PbS diode some distance away. This signal was fed into an audio amplifier, and played back by a loudspeaker. Intercepting the beam stopped the music. We had a great deal of fun playing with this setup." This setup presaged the use of LEDs for optical communication applications.

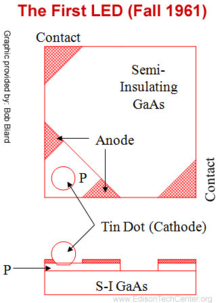

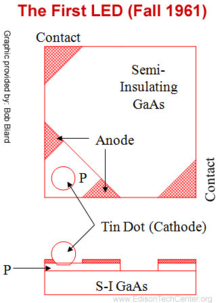

Diagram of the tunnel diode constructed on a zinc diffused area of gallium arsenide semi-insulating substrate

In the fall of 1961, while working at Texas Instruments Inc. in Dallas, TX, James R. Biard and Gary Pittman found that gallium arsenide (GaAs) emitted infrared light when electric current was applied. On August 8, 1962, Biard and Pittman filed a patent titled "Semiconductor Radiant Diode" based on their findings, which described a zinc-diffused p–n junction LED with a spaced cathode contact to allow for efficient emission of infrared light under forward bias.

[/Excerpt]

RCA would be before both of your times...

TI ring any bells??

[Excerpt]

History

The advent of low-cost computers on integrated circuits has transformed modern society. General-purpose microprocessors in personal computers are used for computation, text editing, multimedia display, and communication over the Internet. Many more microprocessors are part of embedded systems, providing digital control over myriad objects from appliances to automobiles to cellular phones and industrial process control.

The first use of the term "microprocessor" is attributed to Viatron Computer Systems describing the custom integrated circuit used in their System 21 small computer system announced in 1968.

Intel introduced its first 4-bit microprocessor 4004 in 1971 and its 8-bit microprocessor 8008 in 1972. During the 1960s, computer processors were constructed out of small and medium-scale ICs, each containing from tens of transistors to a few hundred. These were placed and soldered onto printed circuit boards, and often multiple boards were interconnected in a chassis. The large number of discrete logic gates used more electrical power, and therefore produced more heat, than a more integrated design with fewer ICs. The distance that signals had to travel between ICs on the boards limited a computer's operating speed.

In the NASA Apollo space missions to the moon in the 1960s and 1970s, all onboard computations for primary guidance, navigation and control were provided by a small custom processor called "The Apollo Guidance Computer". It used wire wrap circuit boards whose only logic elements were three-input NOR gates.[8]

The first microprocessors emerged in the early 1970s and were used for electronic calculators, using binary-coded decimal (BCD) arithmetic on 4-bit words. Other embedded uses of 4-bit and 8-bit microprocessors, such as terminals, printers, various kinds of automation etc., followed soon after. Affordable 8-bit microprocessors with 16-bit addressing also led to the first general-purpose microcomputers from the mid-1970s on.

Since the early 1970s, the increase in capacity of microprocessors has followed Moore's law; this originally suggested that the number of components that can be fitted onto a chip doubles every year. With present technology, it is actually every two years,[9] and as such Moore later changed the period to two years.[10]

[/Excerpt]

Never heard of Viatron either, right??

[Excerpt]

Viatron

From Wikipedia, the free encyclopedia


Viatron Computer Systems, or simply Viatron, was an American computer company headquartered in Bedford, Massachusetts, and later Burlington, Massachusetts. Viatron may have coined the term "microprocessor".[1]

Viatron was founded in 1967 by engineers from Mitre Corporation led by Dr. Edward M. Bennett and Joseph Spiegel. In 1968 the company announced its System 21 small computer system together with its intention to lease the systems starting at a revolutionary price of $40 per month. The basic system included a microprocessor with 512 characters of read/write RAM memory, a keyboard, a 9-inch (23 cm) CRT display and two cartridge tape drives.[2]

The system specifications, advanced for 1968 – five years before the advent of the first commercial personal computers – caused a lot of excitement in the computer industry. The System 21 was aimed, among others, at applications such as mathematical and statistical analysis, business data processing, data entry and media conversion, and educational/classroom use.

The expectation was that the use of new large-scale integrated circuit technology (LSI) and volume would enable Viatron to be successful at lower margins; however, the prototype did not incorporate LSI technology. In 1960 Bennett claimed that by 1972 Viatron would have delivered more "digital machines" than had "previously been installed by all computer makers." He declared, "We want to turn out computers like GM turns out Chevvies."[3]

The semiconductor industry was unable to produce circuits in the volumes required, forcing Viatron to sell fewer than the planned 5,000–6,000 systems per month. This raised the production costs per unit and prevented the company from ever achieving profitability.

Bennett was fired in 1970, and the company declared Chapter XI bankruptcy in 1971.[1]

[/Excerpt]