Bitterhook

Diamond Member

- Nov 13, 2025

If AI ever develops true "intelligence" I will be surprised. Most computers run on the old binary, "0" or "1". The newer Quantum Computers have a different architecture.

But neither one can "think" or "reason". They just run algorithms and search routines, evaluate the results, and move on to the next iteration.

After a time they either identify a trend toward a solution, or see the trail getting colder, and move on to better parameters to evaluate.
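To make that loop concrete, here is a minimal sketch of one such search routine (hill climbing with random restarts). It is only an illustration of the "evaluate, follow the trend, move on when the trail goes cold" idea, not how any particular AI system works. The `score` function, the `patience` cutoff, and the search range are all made-up assumptions.

```python
import random

def score(x):
    # Hypothetical objective for illustration: higher is better, peak at x = 3.
    return -(x - 3.0) ** 2

def search(iterations=2000, step=0.5, patience=50, seed=0):
    rng = random.Random(seed)
    current = rng.uniform(-10, 10)   # random starting guess
    best = current
    stale = 0                        # iterations since the last improvement
    for _ in range(iterations):
        candidate = current + rng.uniform(-step, step)
        if score(candidate) > score(current):
            current = candidate      # trend toward a solution: keep following it
            stale = 0
        else:
            stale += 1
        if score(current) > score(best):
            best = current           # remember the best point seen so far
        if stale >= patience:
            # The trail has gone cold: jump to fresh parameters and try again.
            current = rng.uniform(-10, 10)
            stale = 0
    return best

print(search())
```

Nothing in there "understands" the problem. It only compares scores and keeps whatever scored better.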

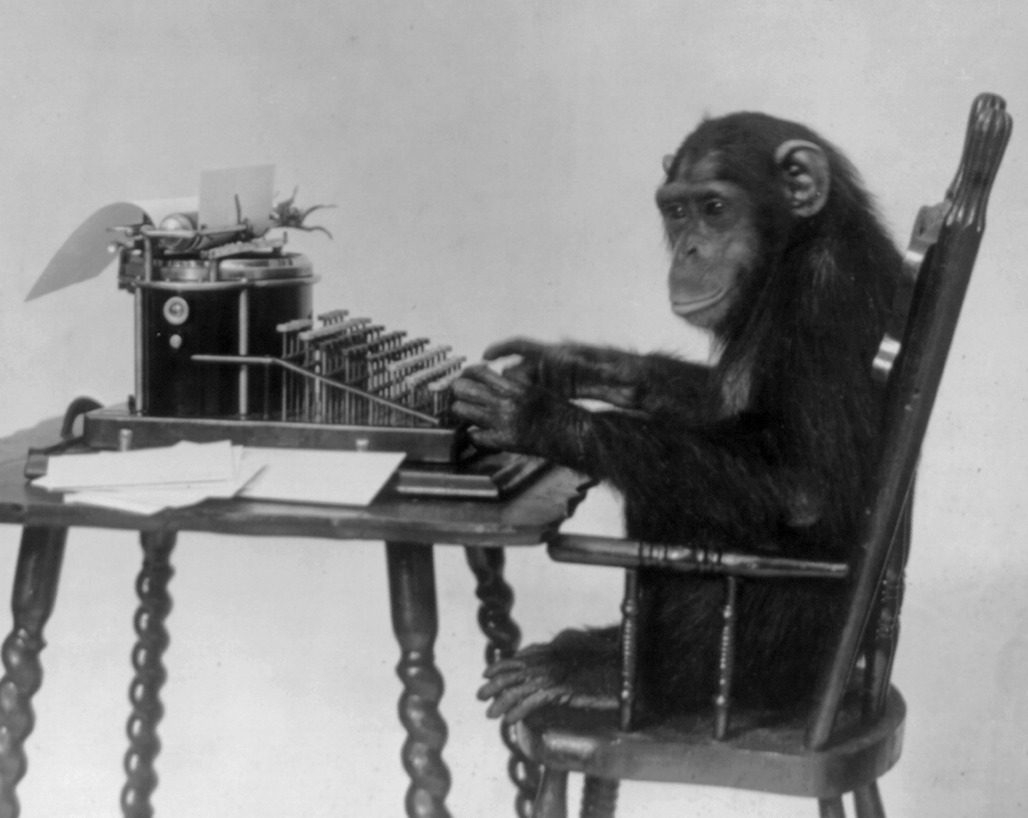

This methodology reminds me of the Infinite Monkey Theorem, an idea that has been traced back to Aristotle and Cicero.

Agreed, neither the number of monkeys nor the time allotted is infinite, but the speed of computers mimics "infinite time and infinite monkeys". Time will tell if this "pseudo super-intelligence" can actually solve heretofore unsolvable problems, not with super-intelligence, but with the Infinite Monkey Theorem.
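For a sense of scale, here is a rough back-of-the-envelope sketch. It assumes a 27-key typewriter (26 letters plus a space), every key equally likely, and a hypothetical machine making a billion independent attempts per second. None of those numbers come from a real system.

```python
def expected_attempts(keys, length):
    # Each attempt matches with probability (1/keys)**length,
    # so the expected number of independent attempts is keys**length.
    return keys ** length

def years_to_type(phrase, keys=27, attempts_per_second=1e9):
    # Assumed speed: one billion random attempts per second (hypothetical).
    seconds = expected_attempts(keys, len(phrase)) / attempts_per_second
    return seconds / (365.25 * 24 * 3600)

phrase = "to be or not to be"
print(expected_attempts(27, len(phrase)))
print(years_to_type(phrase))
```

Even for an 18-character phrase, pure random typing at that speed works out to something on the order of a billion years, so whatever these programs are doing, they have to be pruning the search rather than trying everything.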

One of the earliest instances of the use of the "monkey metaphor" is that of French mathematician Émile Borel in 1913,[1] but the first instance may have been even earlier. Jorge Luis Borges traced the history of this idea from Aristotle's On Generation and Corruption and Cicero's De Natura Deorum (On the Nature of the Gods), through Blaise Pascal and Jonathan Swift, up to modern statements with their iconic simians and typewriters.[2] In the early 20th century, Borel and Arthur Eddington used the theorem to illustrate the timescales implicit in the foundations of statistical mechanics.

Infinite monkey theorem - Wikipedia

en.wikipedia.org

Google's DeepMind AI lab developed a program called AlphaZero that taught itself how to play chess in 9 hours. It refined its skills by playing against itself, with no human games to learn from, until it could beat Stockfish, one of the strongest chess engines and far stronger than any Grandmaster. It constantly adapted its style and developed its own strategies.

All that sounds nice, until the developers went back and examined what the program had done to be so successful at learning, adapting, and developing strategies. During their review, they reached a point where they couldn't figure out why the program was doing what it was doing, and eventually they could not make heads or tails of how it was teaching itself.

So, if we make something smart enough to learn, at what point does it learn something on its own, and in a manner we cannot control? That might be more than possible now. AlphaZero still only wanted to win at chess (taught itself in 9 hours), shogi (taught itself in 12 hours), and Go (taught itself in 13 days), but it wouldn't be the first time we have messed with Pandora's box.