A major situation is developing between Anthropic (maker of Claude AI), the U.S. Department of Defense, and the federal government. It goes deeper than ordinary tech industry drama and touches on serious questions about AI ethics, surveillance, military use, and corporate values.

The U.S. government has ordered agencies to stop using Anthropic’s AI. President Trump directed all U.S. government agencies to stop using Anthropic’s technology after a high‑profile dispute over how Anthropic’s AI can be used.

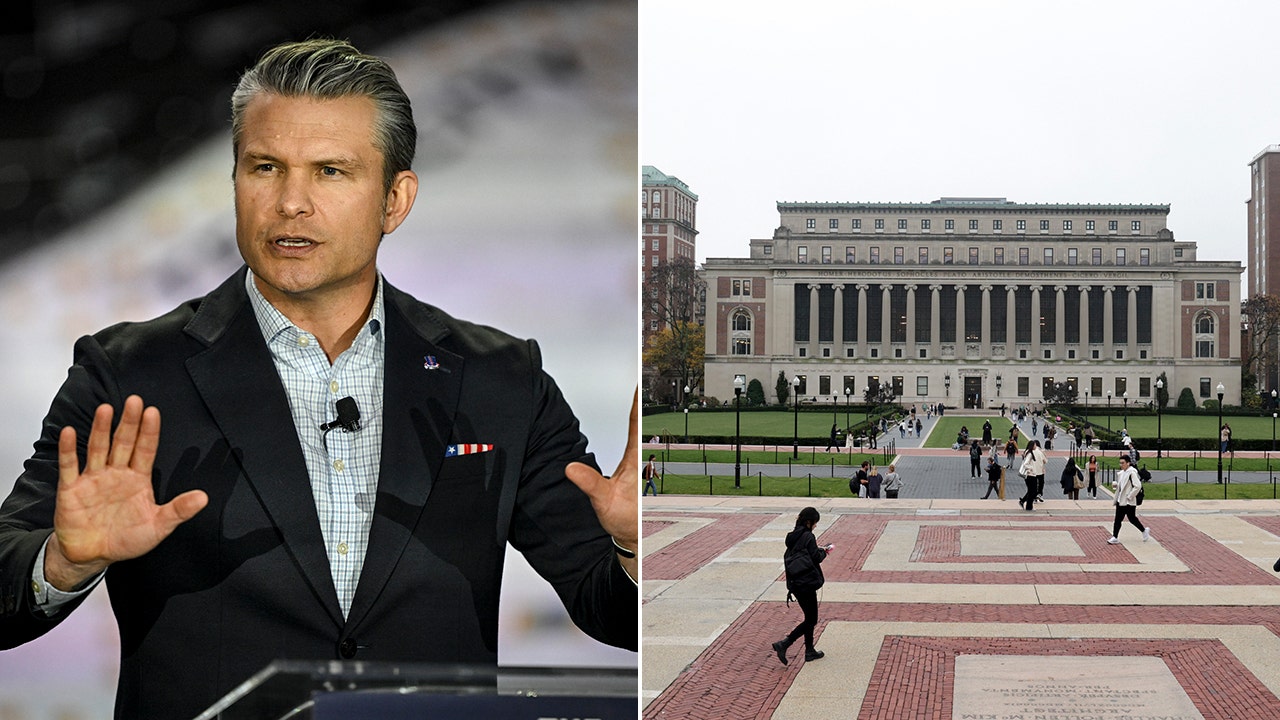

Secretary of Defense Pete Hegseth designated Anthropic as a “supply chain risk to national security,” a designation usually reserved for foreign adversaries. This blocks military contractors from using Anthropic’s technology.

The trigger? A refusal to remove safety guardrails. Anthropic refused Pentagon demands to remove safeguards that prohibit the use of its AI for mass domestic surveillance or for fully autonomous weapons without human control. Anthropic says it cannot in good conscience agree to those terms.

Anthropic is fighting back. The company has stated that it will challenge any supply chain risk designation in court and that such a label is unprecedented for a U.S. company negotiating ethical limits.

This is a battle over ethical limits vs. military access. Federal reports say the Pentagon wants AI models it can use for any lawful purpose without restrictions, while Anthropic wants written assurances that its AI won’t be weaponized or used for mass surveillance of citizens.

This isn’t just a contract dispute; it’s a symbolic clash between corporate ethical commitments and governmental demands for unrestricted use of powerful AI. The government is effectively asserting that companies shouldn’t impose ethical limits on how military or federal actors can use AI.

Anthropic’s refusal to remove safeguards is rare and has drawn industry support from AI researchers and engineers worried about misuse. If the government can force companies to comply, it sets a precedent that could affect every AI vendor and how safety standards are enforced in practice.

This story is actively unfolding right now, and the implications go beyond Anthropic. They touch on regulation, civil liberties, and the future of responsible AI deployment.

If you care about the future of AI ethics and don't want AI to be weaponized against our citizens and humanity in general, this is an important moment.

OpenAI (ChatGPT) has already signaled its willingness to work with the government's demands.

Defense Secretary Pete Hegseth deemed artificial intelligence firm Anthropic a supply chain risk on Friday, following days of increasingly heated public conflict with the AI company.

www.cbsnews.com

Hegseth also said he was designating Anthropic as a supply chain risk, a move that could prevent U.S. military vendors from working with the company.

www.defensenews.com

Hours after exclusion of Anthropic, OpenAI announces fresh Pentagon deal, but says it will maintain same safety guardrails at the heart of the dispute

www.theguardian.com