In an earlier thread I outlined the behavior of the human brain's visual system in terms of differential geometry and the extraction of information about surfaces.

But now, scientists at MIT have come one step closer: they've succeeded in matching the visual map with the motor map.

In humans, this relates to our ability to reach behind objects for other objects. We reach "around" the obstacle, and in most cases one trajectory is as good as another.

MIT scientists have duplicated this feat with machine learning, and the mechanism is surprisingly simple.

www.livescience.com


In humans, the "world map" is generated in the medial temporal lobe, in and around the hippocampus. Mappings from the various sensory and motor modalities "align" there.

The MIT scientists merely had to align the Jacobian map from the visual system with the Jacobian map from the motor system. Throw in a learning algorithm, and the rest happens by itself.
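To make the Jacobian idea concrete, here is a toy sketch of my own, not the MIT code. It assumes the relation between a small motor command `du` and the visual motion `dv` seen by the camera is linear, `dv = J du`, and learns `J` from random "wiggles" with a simple error-correcting update. The names `TRUE_J`, `learn_jacobian`, and `command_for` are made up for illustration, and the 2x2 setup stands in for what would be a much larger, nonlinear map in a real robot.

```python
import random

def matvec(J, u):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(j * x for j, x in zip(row, u)) for row in J]

def learn_jacobian(true_J, steps=2000, lr=0.1, seed=0):
    """Estimate a Jacobian from (motor command, observed visual motion) pairs."""
    rng = random.Random(seed)
    n_out, n_in = len(true_J), len(true_J[0])
    J = [[0.0] * n_in for _ in range(n_out)]  # start with no knowledge
    for _ in range(steps):
        du = [rng.uniform(-1, 1) for _ in range(n_in)]  # random motor wiggle
        dv = matvec(true_J, du)   # motion the "camera" observes (simulated)
        pred = matvec(J, du)      # motion the current estimate predicts
        for i in range(n_out):
            err = dv[i] - pred[i]
            for k in range(n_in):
                # Nudge the estimate to shrink the prediction error.
                J[i][k] += lr * err * du[k]
    return J

def command_for(J, dv):
    """Invert a 2x2 Jacobian: which motor command yields visual motion dv?"""
    (a, b), (c, d) = J
    det = a * d - b * c
    return [(d * dv[0] - b * dv[1]) / det, (a * dv[1] - c * dv[0]) / det]

# Hypothetical "true" motor-to-visual Jacobian, hidden from the learner.
TRUE_J = [[1.0, 0.5], [-0.3, 2.0]]
J_hat = learn_jacobian(TRUE_J)
du = command_for(J_hat, [0.2, -0.1])  # command for a desired visual motion
```

Once `J_hat` matches the hidden map, inverting it turns "I want the image to move like this" into "send this motor command", which is the sense in which aligning the two maps gives you control.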

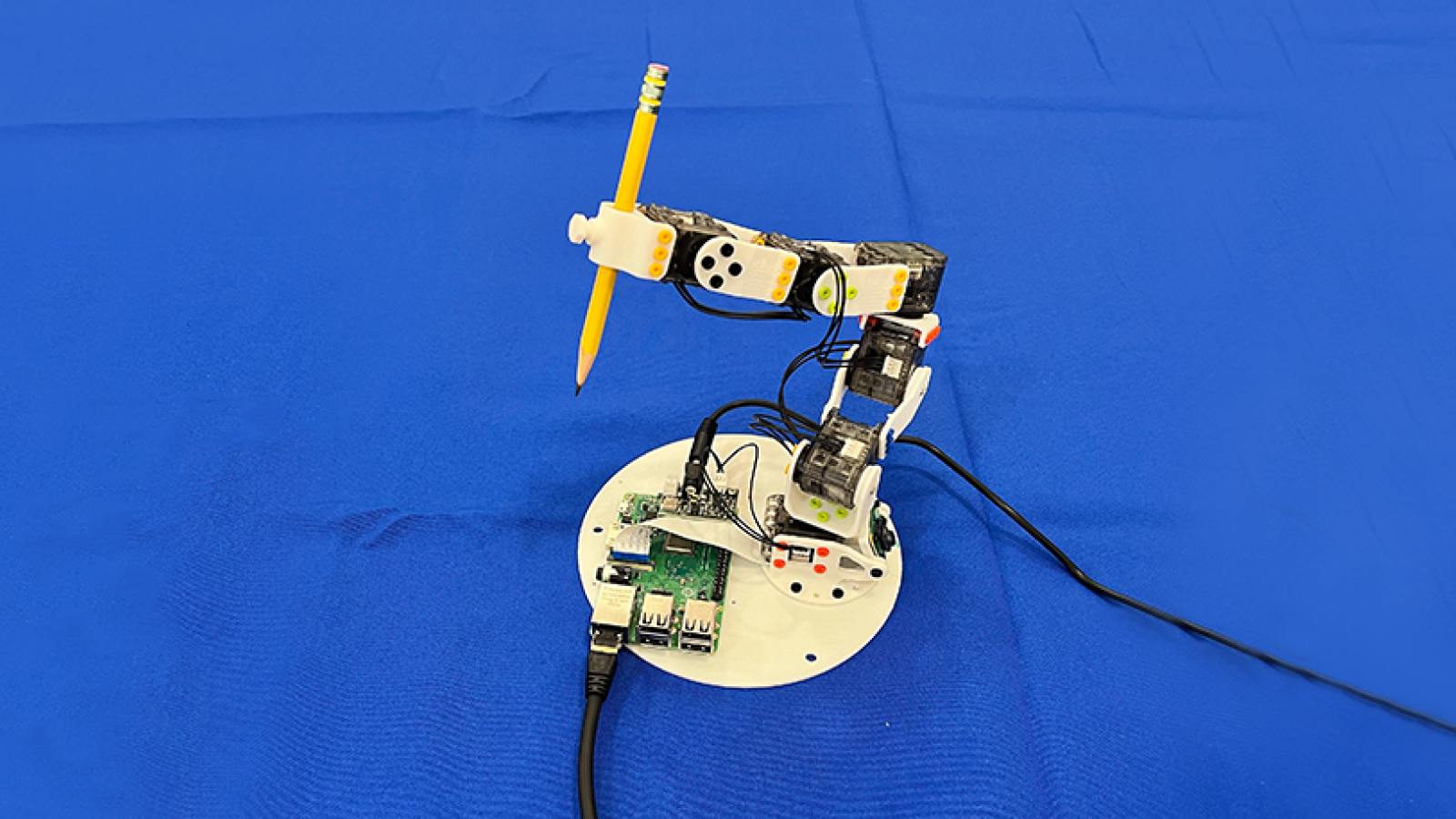

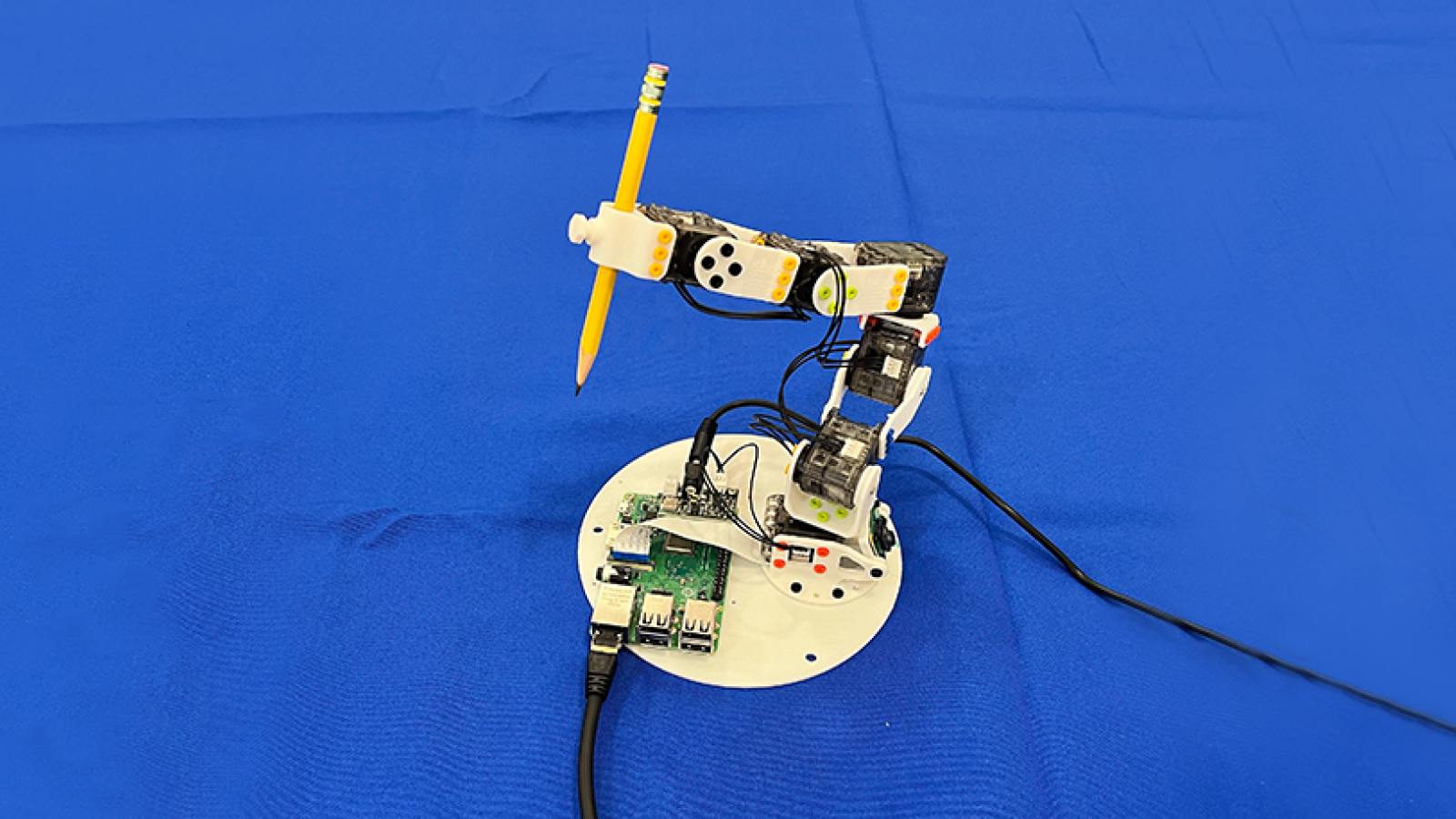

MIT's new AI can teach itself to control robots by watching the world through their eyes — it only needs a single camera

The new training method doesn't use sensors or onboard control tweaks, but a single camera that watches the robot's movements and uses visual data.
