So, here's why alignment is important.

Barn owls locate their prey in the dark based only on the sounds the prey makes. The location is determined from the "inter-aural time difference": a sound from the left reaches the left ear first. Owls can resolve time differences as small as 5 microseconds, using neurons whose temporal resolution is at best 2 msec.
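To get a feel for how small 5 microseconds is, here's a rough sketch using the textbook far-field approximation ITD = d·sin(θ)/c for two point-like ears a distance d apart. The 5 cm ear separation is an assumed, illustrative number (not a measured barn owl value), and real heads add diffraction effects that this formula ignores.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at roughly 20 °C
EAR_SEPARATION = 0.05   # m, ASSUMED illustrative value, not a measured owl figure


def itd_seconds(azimuth_deg, d=EAR_SEPARATION, c=SPEED_OF_SOUND):
    """Far-field ITD for two point receivers: d * sin(azimuth) / c.

    Positive azimuth = source on the right, so a positive ITD means the
    right ear hears it first; negative azimuth (left) gives a negative ITD.
    """
    return d * math.sin(math.radians(azimuth_deg)) / c


def azimuth_for_itd(itd_s, d=EAR_SEPARATION, c=SPEED_OF_SOUND):
    """Invert the formula; only defined for |itd_s| <= d / c."""
    return math.degrees(math.asin(itd_s * c / d))


max_itd_us = itd_seconds(90) * 1e6
print(f"largest possible ITD: {max_itd_us:.0f} us")                  # ~146 us
print(f"5 us near straight ahead: {azimuth_for_itd(5e-6):.1f} deg")  # ~2.0 deg
```

Under those assumptions the entire ITD range is only about ±146 μs, far below a 2 msec neuron resolution, and a 5 μs difference near straight ahead works out to roughly 2 degrees of azimuth.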

How do they do this? It has been studied intensively by Mark Konishi at Caltech. It has to do with a neural architecture that resembles a delay line, but it's much more complicated than that: it involves phase locking of auditory receptors and a "head-related transfer function" that depends on the size and shape of the individual's head.
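The delay-line idea on its own (the classic Jeffress model, a textbook idealization rather than the full owl circuit described above) can be sketched as an array of coincidence detectors, each delaying one ear's input by a different internal amount. The detector whose internal delay cancels the external ITD fires the most. The spike trains, sample counts and spike probability below are hypothetical.

```python
import random


def make_ear_inputs(itd_samples, n=2000, p_spike=0.2, seed=0):
    """Hypothetical phase-locked spike trains: the same random 'sound'
    reaches both ears, the right ear lagging the left by itd_samples
    (positive = sound from the left, negative = sound from the right)."""
    rng = random.Random(seed)
    src = [1 if rng.random() < p_spike else 0 for _ in range(n)]
    left = src[:]
    right = [src[t - itd_samples] if 0 <= t - itd_samples < n else 0
             for t in range(n)]
    return left, right


def jeffress_array(left, right, max_delay):
    """One coincidence detector per internal delay k: the left input is
    delayed by k samples, and the detector counts the time steps where
    the delayed left spike and the right spike coincide."""
    n = len(left)
    return {
        k: sum(left[t - k] & right[t] for t in range(n) if 0 <= t - k < n)
        for k in range(-max_delay, max_delay + 1)
    }


left, right = make_ear_inputs(itd_samples=3)
counts = jeffress_array(left, right, max_delay=6)
best = max(counts, key=counts.get)
print("most active detector: internal delay", best)  # 3
```

The point of the design is that the ITD is read out as *which* detector is most active (a place code), so no single neuron needs microsecond timing precision.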

But here's the thing - at some point a tonotopic frequency map coming out of the ear, is converted to a

spatial map in the midbrain. And here's the amazing stuff: this map

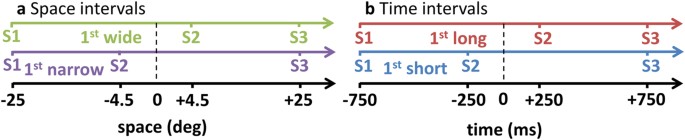

self organizes. Not only that, but during a critical period lasting about 200 days starting at a couple weeks of age, the auditory spatial map will align with a visual map from a nearby brain area. And, we can control how those two maps align, by putting prisms on the eyes. If we shift the visual field by 20 degrees during the critical period, then the owl's auditory localization capability in darkness will be off by 20 degrees.
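Here's a toy sketch of how a visually instructed map could end up shifted by prisms. It uses a hypothetical Hebbian-style averaging rule, not the owl's actual circuit and not a full Kohonen self-organizing map: each auditory map unit is indexed by visual position, and whenever a unit is visually active it accumulates the ITD heard at that moment. The map spacing, the activity width `SIGMA`, the sine-shaped ITD cue and the ±60 degree source range are all assumptions.

```python
import math
import random

# Hypothetical map: one unit every 5 degrees of visual space.
MAP_POSITIONS = list(range(-90, 91, 5))
SIGMA = 5.0  # hypothetical width (degrees) of the visual activity bump


def itd_cue(azimuth_deg):
    """Normalized ITD cue, sin(azimuth); physical constants left out."""
    return math.sin(math.radians(azimuth_deg))


def train_map(prism_shift_deg, n_experiences=5000, seed=1):
    """Visually instructed, Hebbian-style alignment (toy model).

    Each experience: a sound at azimuth theta is also *seen*, through the
    prisms, at theta + prism_shift_deg. Units near that visual position are
    active and accumulate the ITD they hear at the same moment.
    """
    rng = random.Random(seed)
    num = [0.0] * len(MAP_POSITIONS)
    den = [0.0] * len(MAP_POSITIONS)
    for _ in range(n_experiences):
        theta = rng.uniform(-60.0, 60.0)
        seen_at = theta + prism_shift_deg
        cue = itd_cue(theta)
        for i, p in enumerate(MAP_POSITIONS):
            activity = math.exp(-((p - seen_at) ** 2) / (2 * SIGMA ** 2))
            num[i] += activity * cue
            den[i] += activity
    # Learned preferred ITD of each unit: activity-weighted mean of cues heard.
    return [n / d for n, d in zip(num, den)]


def localize_in_dark(preferred_itd, azimuth_deg):
    """No vision: the unit whose learned ITD best matches the cue wins,
    and its map position is the perceived direction."""
    cue = itd_cue(azimuth_deg)
    winner = min(range(len(MAP_POSITIONS)),
                 key=lambda i: abs(preferred_itd[i] - cue))
    return MAP_POSITIONS[winner]


shifted = train_map(prism_shift_deg=20)
print(localize_in_dark(shifted, 0))  # 20
```

With `prism_shift_deg=0` the same sound is placed at 0; in this toy model the auditory map simply inherits whatever offset the visual teaching signal had during training.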

A similar process occurs in humans. The unified spatial map of the external world appeared very early in evolution: it's in the midbrain (no cerebral cortex required), and it's highly conserved from fish upwards. Here's what it looks like in humans:

[Figure: attachment 1192477, sagittal view of half the human brain, inferior colliculus marked with a red dot]

You can see the little red dot there, and the white area surrounding it. The white area has two little bumps on its left side (which would be the back of the brain). These are the superior and inferior colliculi (you're only looking at half the brain, so there are actually four bumps, two on each side).

The little red dot is the inferior colliculus, which deals with hearing. Just above it is the superior colliculus, which deals with vision. Between the superior and inferior colliculi are connections that map the visual field onto the auditory field. These connections self-organize, and the result can be manipulated by changing the statistics of the environmental input.

This is a model system, and we're studying it for a reason: in both owls and humans (which localize sounds with approximately equal precision), there is a next step. In the colliculi of the brainstem, the synaptic weights that result from the self-organization process end up hard-wired. But what if they weren't? What if we could change the visual-to-auditory mapping by dynamically changing the synaptic weights?

We need this capability for navigation. Think of a rat navigating a maze to a reward, where the maze has several entry points and the rat enters at one of them at random. Same maze, same reward location, just a different entry point. You can train the rat on three entry points, then introduce a brand-new fourth one, and show that the rat mentally rotates its existing models to find a fit. (Not only that, but while it's searching it "superstitiously" prefers trained choices, often attempting learned sequences.)
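The "mental rotation" idea can be sketched as brute-force template matching: rotate the stored maze model through candidate angles and keep the one that best fits what the rat currently sees. This is an illustrative assumption, not a claim about how the rat actually computes it; the landmark coordinates are hypothetical, correspondences between landmarks are assumed known, and both point sets are centered first so only rotation has to be searched.

```python
import math


def rotate(points, angle_deg):
    """Rotate 2-D points counter-clockwise about the origin."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [(c * x - s * y, s * x + c * y) for x, y in points]


def center(points):
    """Subtract the centroid, so translation drops out of the comparison."""
    mx = sum(x for x, _ in points) / len(points)
    my = sum(y for _, y in points) / len(points)
    return [(x - mx, y - my) for x, y in points]


def mismatch(a_pts, b_pts):
    """Sum of squared distances between corresponding landmarks."""
    return sum((ax - bx) ** 2 + (ay - by) ** 2
               for (ax, ay), (bx, by) in zip(a_pts, b_pts))


def best_rotation(stored_model, observed, step_deg=15):
    """Try every candidate angle; keep the one whose rotated model fits best."""
    model, seen = center(stored_model), center(observed)
    return min(range(0, 360, step_deg),
               key=lambda ang: mismatch(rotate(model, ang), seen))


# Hypothetical landmarks (e.g. two cues and the reward) as learned from a
# trained entry point; listed in the same order in both point sets.
stored = [(0.0, 1.0), (1.0, 2.0), (-0.5, 3.0)]
# Same maze entered from a new side: rotated 90 degrees and shifted.
observed = [(x + 2.0, y - 1.0) for x, y in rotate(stored, 90)]
print(best_rotation(stored, observed))  # 90
```

An exhaustive search like this is the simplest possible "find a fit"; it also makes it easy to see how a bias toward already-trained angles could be added as a prior on the candidates.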

Vision and touch map their input onto a sensory surface (the retina, the skin), but hearing has to reconstruct that surface from binaural time differences. What's most interesting about the barn owl is that it can localize sounds behind itself (it can turn its head almost 180 degrees), where there is no visual input. So what would you guess: does the spatial map coming out of the inferior colliculus contain the "behind" region or not?
