Here is the first piece of serious engineering. Amazingly enough, no one really knows how the integrator works. So we have two issues that are really part of the same problem. First, we have the topographic map that needs a reference. And second, we have a neural behavior that translates position to burst rate.

From a machine learning standpoint, the first part is easier. It's kinda sad reading through the biology literature about the second part though, the biologists don't know from engineering and just wave their hands over the entire issue. Like, "this signal goes one way and the other signal goes the other way", just kinda assuming that it's all possible and the engineers will eventually figure it out.

So here's a little input about the first part, the alignment of maps. In young birds this takes about 100 days, in a machine it can be done in less than a day.

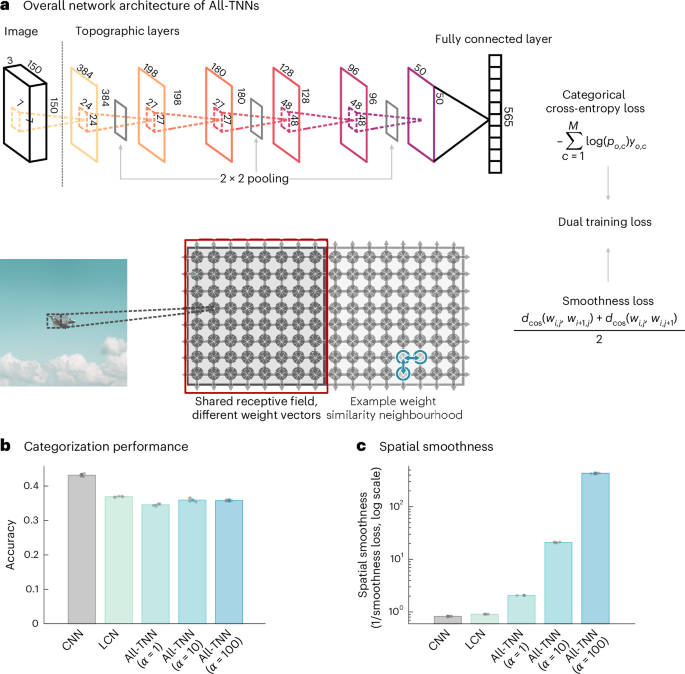

Lu et al. introduce all-topographic neural networks as a parsimonious model of the human visual cortex.

www.nature.com

The second part is intriguing. There are multiple ways to accomplish it, in a neural network, but none of them are necessarily easy. We want a burst rate that's proportional to the velocity of the saccade, that's what the PPRF neurons encode - and saccade velocity is proportional to eccentricity (ie location) on the retina. The trouble is, that bursting is related to the intrinsic membrane properties of neurons. The input doesn't burst, the neuron somehow translates eye position to spike rate based on the behavior of synaptically controlled ion channels.

The machine learning view tells us we should be very careful in defining what we want. An eye movement has an amplitude, a direction, and a velocity - and those are derived from the starting location (empirical) and ending location (desired, predicted). From a control systems viewpoint we have ONE muscle that can be driven by ONE neuron and all it has to do is correctly calculate the rate. But this is not at all how neurons work! Right?

The real muscle has a few thousand muscle fibers, and the more of them that get engaged the more contraction you get. Each individual muscle fiber is noisy and unreliable, but together the population averages out the hiccups and you get a nice smooth Gaussian. In this case the eye movement velocity is related to the mean of the Gaussian, because that determines how many muscle fibers are contracting on average, at the same time.

So one way you could do this, is to have short unidirectional connections between neurons. Another way is with a crossbar. A third way is to have the network specifically control channel conductances in a very small population of highly connected integrators. All we really need is a signal of the form

integral from 0 to a, of x dx

Where a is the target position on the retina. The result will then be multiplied by some scaling factor to keep the eye movement within bounds (often this is an S shaped curve or logistic function).

From a biological standpoint, it's too risky and unreliable to have our network calculate channel conductances in specific neurons. The easiest and most reliable way to do this

given that the starting position is always the origin is to have every source connected to every destination, and simply add up all the active destination elements under the following rule:

if the target is closer to the origin than the current position, output 0, else output 1

So let's say we're making an eye movement 10 degrees to the right, and the limit of our visual field is 100 degrees. The logic says the first 10% of our neurons should be active, resulting in a total output of 10. On the other hand, if we're moving 20 degrees to the right we get a total output of 20, and so on. We end up with a nice linear relationship between target position and output activity. And, the decision for each neuron is entirely local, making it biologically plausible. And, note that topography is no longer needed at this point, we just end up with a single number that says "amount" by which to contract the muscle.

Now that we have a basic mechanism, we can fit it into what we already know about the biology, and see if it works in terms of predicting the known neuron types and behaviors, and the various neural and biochemical pathologies. And it does work, the only odd man out is the "omnipause" neuron that plays a specific role we haven't defined yet (but we will).

Once we have a basic integrator we can build a network. So let's do that. We will define our SC (superior colliculus) to be 320x320, and our retina can be the same since there's no need for higher precision at this point. Then to get the X axis, we'll have 320 neurons that map eccentricity using a "one-hot" code, in other words, the eye can only be in one place at a time, and we only need one neuron to show where it is. And finally, to get the burst rate we determine "how many neurons are medial to the selected position". Easy peasy, and trivial to replicate to the Y axis.

As far as neural "place codes" go, this is probably the easiest one. It can be done linearly, or it can be done statistically using a population code (which is probably closer to what we actually want, since we already know PPRF is a population and not a single neuron). In a population context, each neuron simply makes the decision "am I closer to the midline than the target", and we simply add up all the neurons that say yes.