To follow up - for those of you interested in AI - you probably already know about the TDRNN, which stands for "time delay recurrent neural network".

These are most often used for stochastic control problems. They're very popular in economics, where for example they can tell us which index's volatility has the greatest impact on a stock.

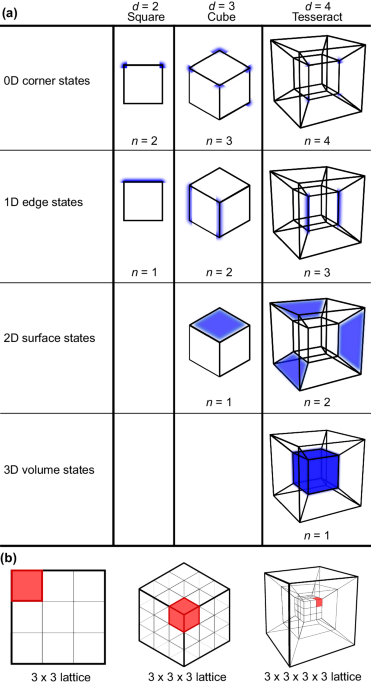

But here's the new and clever piece - the time delays can be mapped with Information Geometry.

Here is what a TDRNN looks like:

Notice the tiny boxes on the right that say "delay box". With an appropriate network construction (like the one discussed in this thread), we can map the time delays into what basically amounts to a shift register, like this:

So you can envision the signal going from one neuron, to the next, to the next - with a small delay at each step.

However the same thing can be accomplished with the "delay boxes", if each box has a slightly different delay.
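To make the equivalence concrete, here is a minimal sketch in plain Python. The names `ShiftRegister` and `delay_boxes` are made up for illustration, and the delays are whole ticks rather than anything biological. It just shows that a serial chain of unit delays and a bank of parallel boxes with delays 0, 1, 2 produce the same taps.

```python
from collections import deque


class ShiftRegister:
    """Serial chain of unit delays: stage k holds the input from k ticks ago."""

    def __init__(self, n_stages):
        self.stages = deque([0.0] * n_stages, maxlen=n_stages)

    def step(self, x):
        # Push the new sample in at stage 0; the oldest sample falls off the end.
        self.stages.appendleft(x)
        return list(self.stages)


def delay_boxes(signal, delays):
    """Parallel 'delay boxes': box k reads the input delayed by delays[k] ticks."""
    out = []
    for t in range(len(signal)):
        # Before the signal starts, a box outputs 0.0.
        out.append([signal[t - d] if t - d >= 0 else 0.0 for d in delays])
    return out


signal = [1.0, 2.0, 3.0, 4.0, 5.0]

reg = ShiftRegister(3)
serial = [reg.step(x) for x in signal]
parallel = delay_boxes(signal, delays=[0, 1, 2])

print(serial == parallel)
```

The serial version passes the signal from stage to stage, while the parallel version fans the same input out to boxes with different delays. The readout at each tick is identical.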

Let's say we have a collection of increasing stochastic delays. It turns out, the network structure is formally identical to an array of Gaussians with increasing mean.
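One way to see this numerically is the rough NumPy sketch below. It assumes each box's delay jitters around its mean with Gaussian noise of the same width, and the mean delays, `sigma` and trial count are hypothetical numbers picked for illustration. Under that assumption, the arrival-time histogram of each box lines up with a Gaussian centered on that box's mean delay.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical numbers, chosen only for illustration.
mean_delays = np.array([5.0, 10.0, 15.0, 20.0])  # mean delay of each box
sigma = 1.5                                       # jitter, same for every box
n_trials = 20_000

# arrivals[k, i] = time at which an impulse sent at t=0 leaves box k on trial i
arrivals = rng.normal(loc=mean_delays[:, None], scale=sigma,
                      size=(len(mean_delays), n_trials))

# Histogram the arrival times of each box on a common time axis.
edges = np.arange(0.0, 30.5, 0.5)
centers = 0.5 * (edges[:-1] + edges[1:])
empirical = np.stack([np.histogram(a, bins=edges, density=True)[0]
                      for a in arrivals])

# The array of Gaussians with increasing mean: rows are boxes, columns are time.
gaussians = (np.exp(-0.5 * ((centers[None, :] - mean_delays[:, None]) / sigma) ** 2)
             / (sigma * np.sqrt(2.0 * np.pi)))

empirical_means = arrivals.mean(axis=1)
max_gap = np.abs(empirical - gaussians).max()

print(empirical_means)
print(max_gap)
```

The `gaussians` array is already two dimensional, with time along one axis and the box (that is, the delay) along the other.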

To understand how this looks, we invoke information geometry. A gentle introduction to information geometry is on the slides in this link:

yosinski.com

Instead of mapping a Gaussian reference frame, we use time delays - so we are basically "shifting" each curve by the amount of the delay. We can do this in two dimensions, so we have the Gaussian on one axis and the delay on the other.
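For anyone who wants the shift in information geometry terms, here is a short worked formula. It assumes every curve is a Gaussian with the same width σ (an assumption for illustration, not something fixed above), so that a delay d only moves the mean. The symbols μ, σ and d are introduced here for that purpose.

```latex
p_d(t) = \frac{1}{\sigma\sqrt{2\pi}}
         \exp\!\left(-\frac{(t-\mu-d)^2}{2\sigma^2}\right)

% Fisher information with respect to the delay d (fixed sigma):
g(d) = \mathbb{E}\!\left[\left(\partial_d \log p_d(t)\right)^2\right]
     = \frac{1}{\sigma^2}

% Line element and KL divergence between the unshifted and shifted curves:
ds = \frac{|dd|}{\sigma},
\qquad
D_{\mathrm{KL}}\!\left(p_0 \,\|\, p_d\right) = \frac{d^2}{2\sigma^2}
```

Under that assumption, a delay of d is just a distance of d/σ along the mean axis of the Gaussian family.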

When we compactify this structure, we get a three dimensional Hawaiian earring - two dimensions of which are an actual earring, like this:

The third dimension is our shift - which is very hard to visualize, but a slice of it looks something like this - only instead of a cone we have a Gaussian.

So now, here's why this matters. Here is the world's simplest compactified neural network. It's called a "monosynaptic reflex arc".

The question is: "where is the origin?" What defines "now"? We can rotate this arc so "now" can be anywhere, but we DEFINE "now" to be the sensorimotor interface. In other words, it's in the muscle spindle, between the sensory fiber and the contractile element. Here's what that looks like:

This way, the "now" that lives in the motor neuron "covers" the activity in the muscle. And every "now" that's above it in the more central reflex loops also covers it - the only difference is that the more central "now" has a greater delay. So we end up with a map of delays which, when aligned, "cover" real time just like a set of Lebesgue pancakes. Therefore, any action generated centrally that rolls out through the network can be mapped and tracked using information geometry. We end up with a time to space mapping, a snapshot of which is the rollout.

This is how the brain learns its own internal activity. For instance, the egocentric reference frame requires an alignment of all 5 senses in both space and time. Well, this is how it's done. The entire snapshot in 3 dimensions can be phase encoded into the firing pattern of a single neuron - which can then be auto encoded (self organized) back into the specific locations and behaviors of each sensory and motor modality.

This is how the frontal lobe encodes "context" for a cognitive map (in the hippocampus or anywhere else). The whole structure basically becomes a giant associative memory, which can be realized using LSTM and TTFS and whatever other learning methods are available.

The topology of the earring structure is non-trivial: it has a non-trivial fundamental group. In discrete form it has a fractal structure, while in continuous form it becomes a Hilbert space.

neurosciencenews.com
